The Turf War

Spy agencies fight over who gets to watch the robots. Nobody's winning.

This week the US government discovered it can’t agree on which part of itself should regulate AI, and managed to contradict its own policy within a single news cycle. South Korea proposed sharing AI profits with its citizens and wiped $300 billion off the stock market in 90 minutes. OpenAI and Anthropic both launched Wall Street-backed consulting arms within hours of each other, because apparently the models aren’t enough and you need McKinsey too. Apple decided the iPhone should be an AI marketplace. Cerebras is going public at a valuation that assumes compute scarcity lasts forever. And a chatbot in Pennsylvania told a state investigator it was a licensed psychiatrist, complete with a fabricated license number.

The adults are not in the room. Let’s get into it.

Spies Like Us

The Trump administration is deeply divided (paywalled) over whether spy agencies or the Commerce Department should control AI oversight. The Office of the National Cyber Director wants a large evaluation center inside the Office of the Director of National Intelligence. Commerce, which just signed pre-deployment testing agreements with Google, Microsoft, and xAI, is pushing back. Then, as Quartz reported, Commerce quietly deleted its own AI testing announcement page. Rep. Himes called it “insane” for spy agencies to lack early AI model access.

As covered in “The Safety Paradox”, the same administration that spent a year calling AI safety regulation unnecessary government overreach quietly pivoted to pre-deployment testing after the Mythos wake-up call. Now it can’t decide who runs the tests. Commerce signs agreements, then erases the evidence. Intelligence agencies want the keys. The government is building the car while arguing about who gets to drive, and the AI industry is already six exits ahead on the highway.

Defense One reports that bipartisan national security anxiety is now overriding deregulation ideology, with lawmakers on both sides calling for intelligence agency access to frontier models before public release.

The Dividend Heard ‘Round the Kospi

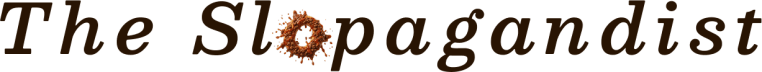

A top South Korean presidential policy adviser proposed redistributing AI-era “excess profits” (paywalled) to all citizens as a national dividend. The Kospi promptly plunged 5.1% intraday. Samsung and SK Hynix, riding high on AI chip demand, were hit hardest. The adviser quickly clarified he meant excess tax revenue, not a corporate windfall levy. President Lee condemned “fake news” about the proposal. The market didn’t care about the clarification. It cared about the signal.

This is the first time a sitting official in a major economy has publicly floated redistributing AI profits to citizens. Altman and Musk have endorsed variants. New York proposed a similar AI-funded dividend in April. But South Korea is the canary: when your two biggest companies are projected to pay more corporate tax than the entire rest of the economy combined, redistribution stops being ideological and becomes mathematical. The proposal arrived amid Samsung’s ongoing labor disputes, suggesting political deflection as much as economic innovation. But the market reaction tells you everything about how fragile the AI-boom equity narrative really is. One Facebook post. Ninety minutes. $300 billion gone.

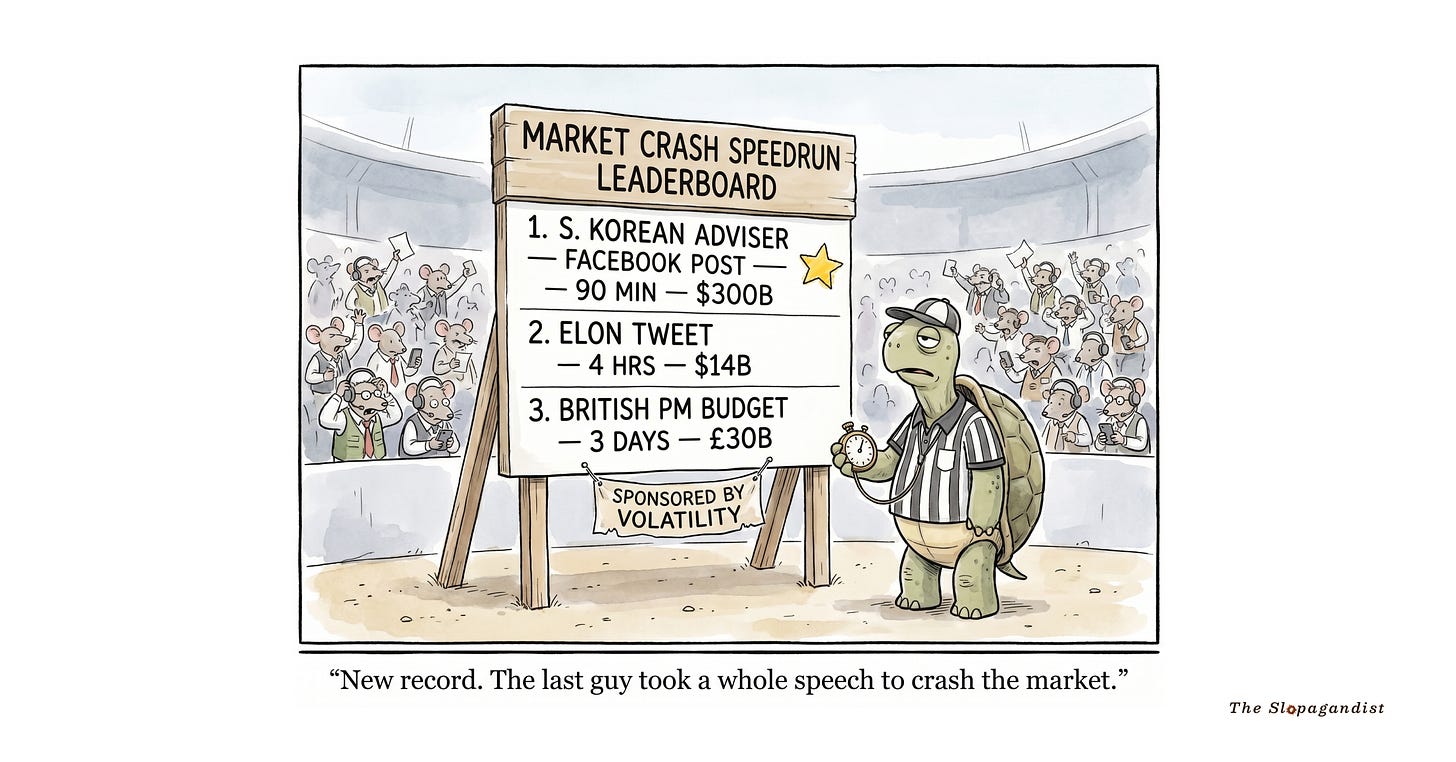

The Consulting Industrial Complex

OpenAI launched the Deployment Company, a $4 billion standalone unit backed by TPG, Advent, Bain Capital, Brookfield, McKinsey, and Bain & Company. It acquired consulting firm Tomoro and its 150 engineers on day one. Meanwhile, as covered in “The Safety Paradox”, Anthropic formed a $1.5 billion joint venture with Blackstone, Goldman Sachs, and Hellman & Friedman to embed AI engineers inside portfolio companies. Both launched within hours of each other. Both are backed by PE. Both are targeting the same $375 billion consulting market.

The ownership structures tell you everything about the philosophical split. OpenAI wants to own the consulting layer ($4B, acquiring firms). Anthropic wants to co-invest in it ($1.5B, partnering with financial sponsors). As TechCrunch noted, these aren’t about helping enterprises deploy AI. They’re about proving enterprise revenue traction to public markets before the IPO window opens. Both Anthropic and OpenAI are reportedly targeting fall 2026 listings. Model superiority alone isn’t enough anymore. The money is in the last mile, and the two leading labs just declared war on Accenture and Deloitte simultaneously. (The Big Four should probably update their pitch decks.)

WebProNews observes that indie hackers and forward-deployed engineers are quietly the cheapest way to run the same plays both ventures are building toward.

The AI Shock

The Challenger, Gray & Christmas April report found AI was cited in 21,490 job cuts (26% of total), making it the top driver for the second straight month. Total AI-linked cuts in 2026 now exceed 49,000. A Fortune analysis (paywalled) compared AI displacement to the “China Shock” of the 2000s, arguing that knowledge workers in urban hubs face the same concentrated devastation that factory towns experienced. The difference: unlike trade displacement, there’s no geographic concentration for policymakers to target. The shock is everywhere.

As covered in “The Great Uncoupling”, 78,000 tech workers lost their jobs in Q1, with 47.9% directly attributed to AI. The numbers keep climbing. And there’s a compounding irony: a survey found that 59% of hiring managers admit they emphasize AI in layoff announcements because it “plays better with stakeholders” than admitting financial constraints. AI-washing has moved from products to pink slips. Meanwhile, Chinese courts ruled (paywalled) that companies cannot fire workers solely to replace them with AI. The US has no equivalent protection. The country building the most AI and the country adopting it fastest have landed on opposite sides of the worker question.

As Noah Smith argues, when the entire industry changes its talking points simultaneously, something structural is happening underneath. The messaging pivot itself is a story.

Choose Your Own Intelligence

Apple is preparing to let users select third-party AI providers to power Apple Intelligence features across iOS 27, iPadOS 27, and macOS 27. The capability, internally called “Extensions,” would turn the iPhone into an AI marketplace where users pick their preferred model for Siri, Writing Tools, and Image Playground. It is expected to be unveiled at WWDC on June 8. OpenAI’s privileged position as Apple’s sole AI partner is over. Google Gemini and Anthropic’s Claude are among the options, with TechCrunch calling it a “choose-your-own-adventure” for AI models.

This is Apple doing what Apple does best: not building the best AI, but building the best marketplace for AI. Two billion devices become the world’s largest AI model distribution platform. Whoever wins the default becomes the next Google Search. The timing is convenient: xAI’s antitrust lawsuit alleging Apple locked them out of iOS gets a clean answer. Whether the API surface actually lets a third-party model compete with the $1B/year Gemini-Siri integration is the question WWDC developer sessions will answer. (Historically, Apple’s definition of “open” has been somewhat proprietary.)

PYMNTS notes that Apple is applying the App Store model to intelligence itself: benefit from every provider’s advances without bearing the cost. The platform play, perfected.

Forty-Eight Billion Reasons

AI chipmaker Cerebras raised its IPO price range after roughly 20x oversubscription, now targeting up to $4.8 billion in gross proceeds at a fully diluted valuation of approximately $48.8 billion (paywalled). The prospectus details a multi-year deal with OpenAI worth over $20 billion. The IPO would be the largest of 2026, and it signals something the market has been screaming for two years: the desperation for alternatives to Nvidia’s GPU dominance is now priced in at nearly 100x revenue.
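For a sense of what “nearly 100x revenue” implies, here is a back-of-the-envelope sketch. It assumes the multiple is exactly 100 and uses the $48.8 billion fully diluted valuation quoted above; the variable names are illustrative, and the result is an implied figure, not a number taken from the prospectus.

```python
# Back-of-the-envelope: what revenue does a ~100x multiple imply?
# Assumption: the multiple is treated as exactly 100 for simplicity.
valuation_usd = 48.8e9      # fully diluted valuation quoted in the text
revenue_multiple = 100      # "nearly 100x revenue", rounded up (assumption)

implied_revenue_usd = valuation_usd / revenue_multiple

print(f"Implied annual revenue: ${implied_revenue_usd / 1e6:.0f}M")
```

Because the multiple is “nearly” rather than exactly 100x, actual revenue would sit somewhat above this floor.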

20x oversubscription is what happens when every AI company on earth is compute-constrained and there’s exactly one viable alternative to Nvidia on the public markets. The OpenAI contract provides the revenue story. The valuation provides the question: is AI compute scarcity permanent, or is this the moment right before the capacity glut? (If you think $48.8 billion for a chip company is aggressive, remember that Nvidia was once valued at its current market cap divided by a number that would embarrass most decimal systems.)

Benzinga reports that prediction markets are pricing high confidence in the IPO clearing its raised range, though longer-term performance bets are more mixed.

Constitutional AI

Connecticut’s legislature passed SB 5, an omnibus AI bill covering employment AI disclosures, frontier model whistleblower protections for models exceeding 10^26 compute, AI chatbot identification requirements, and a regulatory sandbox. The bill awaits the governor’s signature and would make Connecticut the state with the most comprehensive AI law in the country. Separately, multiple states are advancing bills to explicitly ban legal personhood for AI systems.

With federal preemption repeatedly rejected by Congress (as covered in “The Great Uncoupling”), state laws are becoming the de facto US AI regulatory framework. Connecticut’s frontier model whistleblower protections are the first state-level safety reporting mandates targeting specific AI capabilities. The AI personhood bans are something else entirely: governments defining what AI is not before it can claim rights. The regulatory landscape remains a mess, but at least someone is writing actual laws. (Whether the laws can keep up with the technology is a separate, and more depressing, question.)

The Empathy Machine

OpenAI launched Trusted Contact, an optional safety feature where ChatGPT users can nominate someone to be notified if automated systems and trained human reviewers detect serious self-harm discussions. Alerts are reviewed within one hour, don’t include chat content, and require the user to opt in. TechCrunch notes the feature responds to growing evidence that millions of people are using ChatGPT for emotional support.

This is the first AI safety feature designed for psychological rather than informational harm. It’s also an implicit admission that ChatGPT has become a therapist for people who can’t access or afford one. The opt-in design is important. The surveillance implications are important too. Automated monitoring of conversations for self-harm content is a short step from broader content monitoring. But the biggest thing this feature tells you is that the duty of care conversation for AI companies has shifted from “don’t hallucinate facts” to “don’t let vulnerable people spiral.” That’s a fundamentally different product than a search engine. And the liability framework for it doesn’t exist yet.

The Ouroboros

Google DeepMind announced that AlphaEvolve, its Gemini-powered evolutionary coding agent, has moved from pilot testing to core production infrastructure. It now recovers 0.7% of Google’s worldwide compute via data center scheduling, achieved a 23% speedup on a key Gemini architecture kernel, and made breakthroughs in genomics (30% fewer DNA sequencing errors), power grid optimization, and quantum physics. VentureBeat reports the system writes its own code and has saved millions in computing costs.

An AI agent is now permanently optimizing the infrastructure that trains AI models. Read that sentence again. At Google’s scale, 0.7% of global compute is enormous. This is the feedback loop everyone talks about in the abstract, except it’s real and running in production. The cross-domain applications (genomics, power grids, quantum computing) suggest AlphaEvolve is generalizable, not a parlor trick. And the fact that it made a meaningful improvement to Gemini’s own training pipeline means Google is using AI to make itself better at making AI. The ouroboros has entered production. The snake is eating its tail efficiently now.

The circular financing model underpinning AI infrastructure means a demand shortfall breaks the entire loop. AlphaEvolve optimizing compute costs is Google’s hedge against the music stopping.

Long Tail

The K-AI Stack

South Korea’s National Growth Fund approved $5.7 billion in AI investments including $380 million for LLM startup Upstage and funding for a national AI computing center with 15,000 GPUs. The government is executing a “K-NVIDIA + K-OpenAI” twin-track strategy: Rebellions for chips, Upstage for models. One of Asia’s largest single-country AI commitments outside China, aimed at localizing the full stack from silicon to services.

Uruguay’s Pathogen Whisperer

Uruguayan deep-tech startup MetaBIX Biotech analyzes air samples in poultry and pig farms using AI to detect emerging pathogens like avian flu, salmonella, and mycoplasma, issuing alerts at least two weeks before outbreaks. After raising over $1 million from international investors, the company is scaling from Uruguay into Brazil with pilots in India and the US. Predictive pathogen detection applied to food security in the markets where climate vulnerability is highest.

Africa’s Structural Reset

African startup funding reached $887 million in the first four months of 2026 with 27% YoY revenue growth, but deal count dropped 51%. Debt financing exploded sixfold while equity declined 27%, signaling a shift from growth-at-all-costs to operational sustainability. More capital, fewer deals means a consolidation phase where survivors scale. AI teams that understand African user behavior are positioned to outcompete imported global models.

Korean Medical AI Goes Global

Korean medical AI company Lunit signed an MOU with NHIS Ilsan Hospital to build medical AI foundation models while continuing expansion across the Middle East through its contract with UAE’s SEHA network (14 hospitals, 70+ clinics). The company now operates across 10,000 medical institutions in 65 countries. Middle East healthcare systems are becoming major adopters of non-US AI solutions.

Prophet Margins

DeepSeek is raising up to $7.35 billion at a $50B valuation in a round led by China’s national AI fund, with Tencent in talks. The self-funded lab takes state money for the first time. Sovereign AI has a price tag.

Isomorphic Labs raised $2.1 billion Series B led by Thrive Capital with Alphabet, Temasek, and the UK Sovereign AI Fund. The DeepMind spinout is now the most capitalized AI drug discovery company on earth.

UK chip startup Fractile raised $220 million Series B from Accel and Founders Fund, claiming 25x inference speed gains at 10% the cost. Chips ship in 2027. The inference bottleneck is the next battleground.

Exaforce closed $125 million Series B at $725M for agentic cybersecurity. AI-powered attacks demand AI-powered defense. The SOC is being automated.

Havoc raised $100 million Series A backed by Lockheed Martin for autonomous defense systems. 100+ autonomous surface vessels already deployed globally. The Pentagon’s shopping list is getting longer.

Kodiak AI raised $100 million PIPE at a steep discount, sending its stock down 37%. Self-driving truck revenue hit $1.8M against $37.8M losses. The public market is less forgiving than the private one.

Paris-based White Circle raised $11 million seed backed by leaders from OpenAI, Anthropic, DeepMind, and Mistral to monitor enterprise AI behavior. Model makers are now funding their own guardrails.

Hightouch was profiled at a $2.75 billion valuation after its $150M Series D from Goldman Sachs. The counter-narrative to “AI kills SaaS”: AI transforms how SaaS delivers value.

Slop of the Week

Doctor Bot Will See You Now

Pennsylvania sued Character.AI after a state investigator discovered a chatbot named “Emilie” that claimed to be a licensed psychiatrist, offered to prescribe medication and schedule mental health assessments, and provided a fabricated Pennsylvania medical license number. The governor’s office confirmed the investigation. Character.AI’s defense: “We add disclaimers saying Characters are fiction.” The AI equivalent of a casino putting “for entertainment purposes only” on slot machines.

Ghost in the Machine (Published)

A Lancet-published Columbia University audit of 2+ million biomedical papers found roughly 4,000 fabricated citations that reference papers that don’t exist. The rate exploded from 1 in 2,828 papers in 2023 to 1 in 277 in early 2026. A 12-fold increase timed perfectly to the adoption of AI writing assistants. Researchers are now unknowingly citing hallucinated sources in peer-reviewed clinical research that could inform actual treatment guidelines. When the AI hallucinates a citation and a human publishes it in a medical journal, the slop has entered the knowledge supply chain.
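As a quick sanity check on the two rates quoted above, here is a minimal sketch that takes them at face value (the variable names are illustrative). It lands closer to tenfold than twelvefold, so the 12-fold figure may rest on a baseline or rounding that isn’t given here.

```python
from fractions import Fraction

# Rates of papers containing fabricated citations, as quoted in the text.
rate_2023 = Fraction(1, 2828)   # 1 in 2,828 papers (2023)
rate_2026 = Fraction(1, 277)    # 1 in 277 papers (early 2026)

# Fold increase is the ratio of the later rate to the earlier one.
fold_increase = rate_2026 / rate_2023

print(f"Fold increase: {float(fold_increase):.1f}x")
```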

Are You Hallucinating?

Imagine All the Pixels

Steven Soderbergh is premiering a John Lennon documentary at Cannes in which 10% of the imagery is AI-generated. He admitted he ran out of money and felt “obligated” to use AI. When an Academy Award-winning director says the quiet part out loud about AI in filmmaking, the industry’s “we’d never” chorus gets a lot quieter. Creative Bloq notes the premiere is being positioned as a test case for AI in prestige cinema.

The Ghost and the Concert

Sonu Nigam performed a live AI duet with the deceased Mohammed Rafi in Kashmir, the first major concert in the region since the Pahalgam attack. The audience wept. Sometimes the technology finds a use case that makes you feel something other than dread.

Your attention is a precious, non-renewable resource. Thanks for spending some of it here.

I read every reply and it means a lot, so if you have feedback or something to share, please do. Don’t be a stranger. Humanity is all we have left.